Turn production traces into evals, compare prompts and models, simulate end-to-end agentic systems and improve quality with every release.

AI agents can break or behave differently in production, a model swap can degrade quality, and a prompt change can introduce regressions. Without structured evaluations and simulations, teams rely on manual checks and production feedback to catch issues.

LangWatch provides a developer-first yet collaborative platform to define evals, run experiments, simulate multi-step agent behavior, and monitor production signals, so changes to prompts, models, or agents can be tested and validated before they ship.

Book a demo

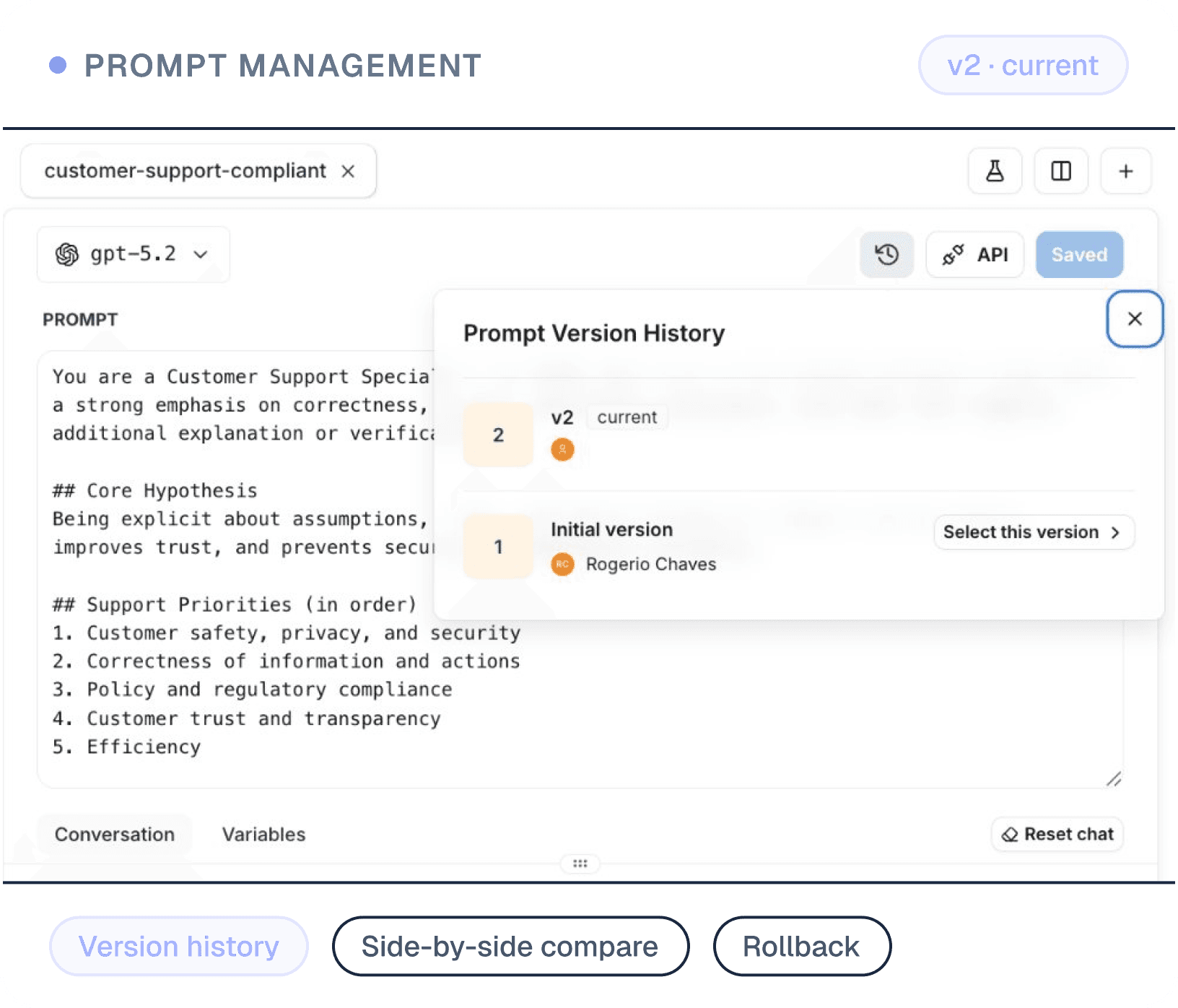

Version, compare, and deploy prompt and model changes with full traceability. Roll out experiments safely using feature-flag–style controls, with clear audit trails for every change.
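As a rough illustration of what a feature-flag-style rollout between two prompt versions can look like, here is a minimal sketch using only the Python standard library. It is not LangWatch's API: the `PROMPTS` table, `rollout_bucket`, and `choose_prompt_version` are hypothetical names invented for this example, and the bucketing scheme (hashing a user id into 100 buckets) is just one common way to get a stable percentage split.

```python
import hashlib

# Hypothetical prompt versions; names and texts are illustrative only.
PROMPTS = {
    "v1": "You are a helpful support assistant.",
    "v2": "You are a concise, friendly support assistant.",
}


def rollout_bucket(user_id: str) -> int:
    """Map a user id to a stable bucket in the range [0, 100)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def choose_prompt_version(user_id: str, rollout_percent: int) -> str:
    """Serve "v2" to roughly rollout_percent of users and "v1" to the rest."""
    return "v2" if rollout_bucket(user_id) < rollout_percent else "v1"


# The same user always lands in the same bucket, so their experience is stable.
version = choose_prompt_version("user-123", rollout_percent=10)
system_prompt = PROMPTS[version]
```

Because the bucket depends only on the user id, raising `rollout_percent` gradually moves more users to the new version without reshuffling the ones already on it.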

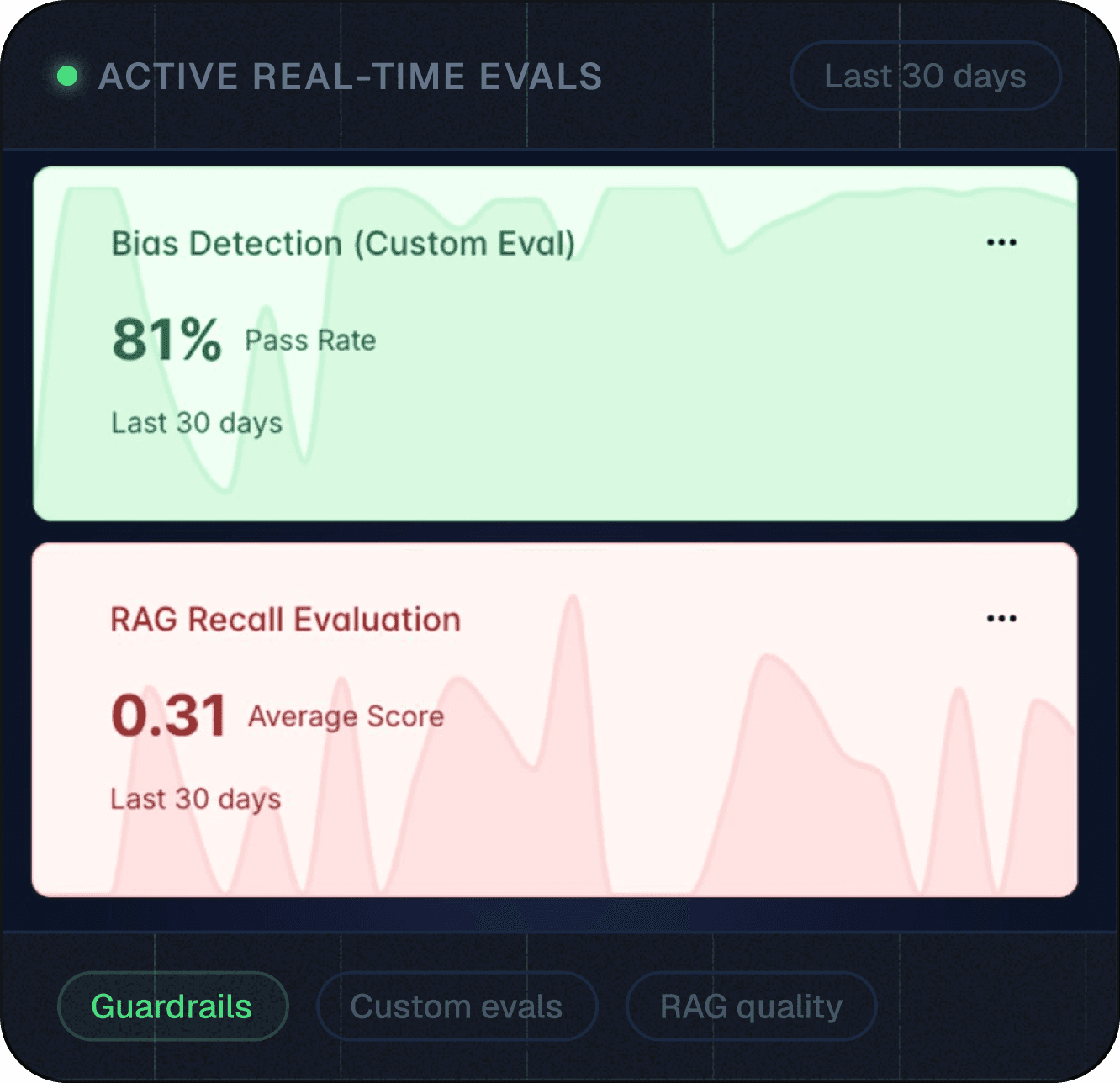

Create and tune custom evals that measure quality specific to your product, in real time.

Instantly search and inspect any LLM interaction across environments. Debug failures, investigate incidents, and support audits with complete visibility from development through production.

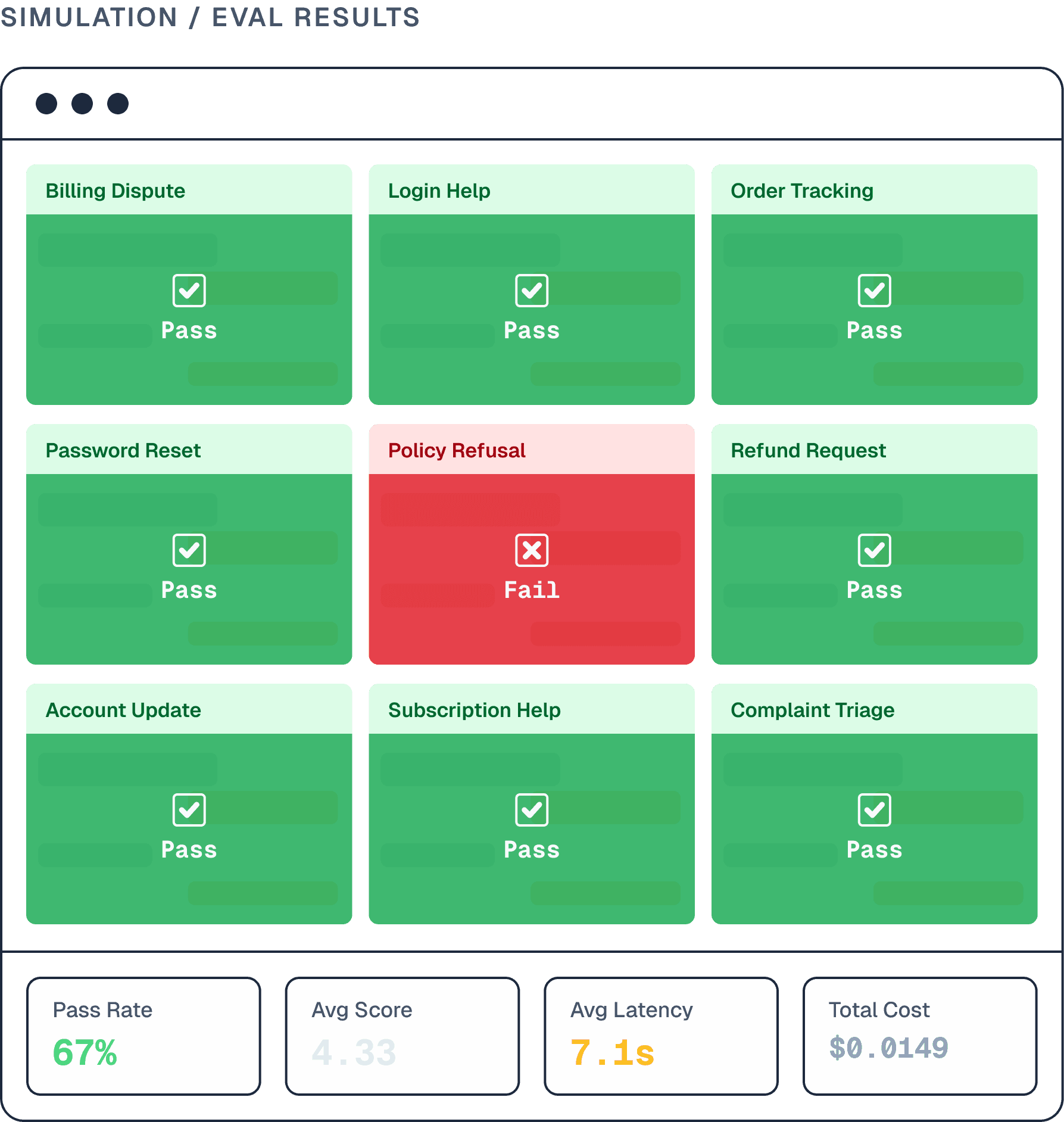

Run thousands of synthetic conversations across scenarios, languages, and edge cases.

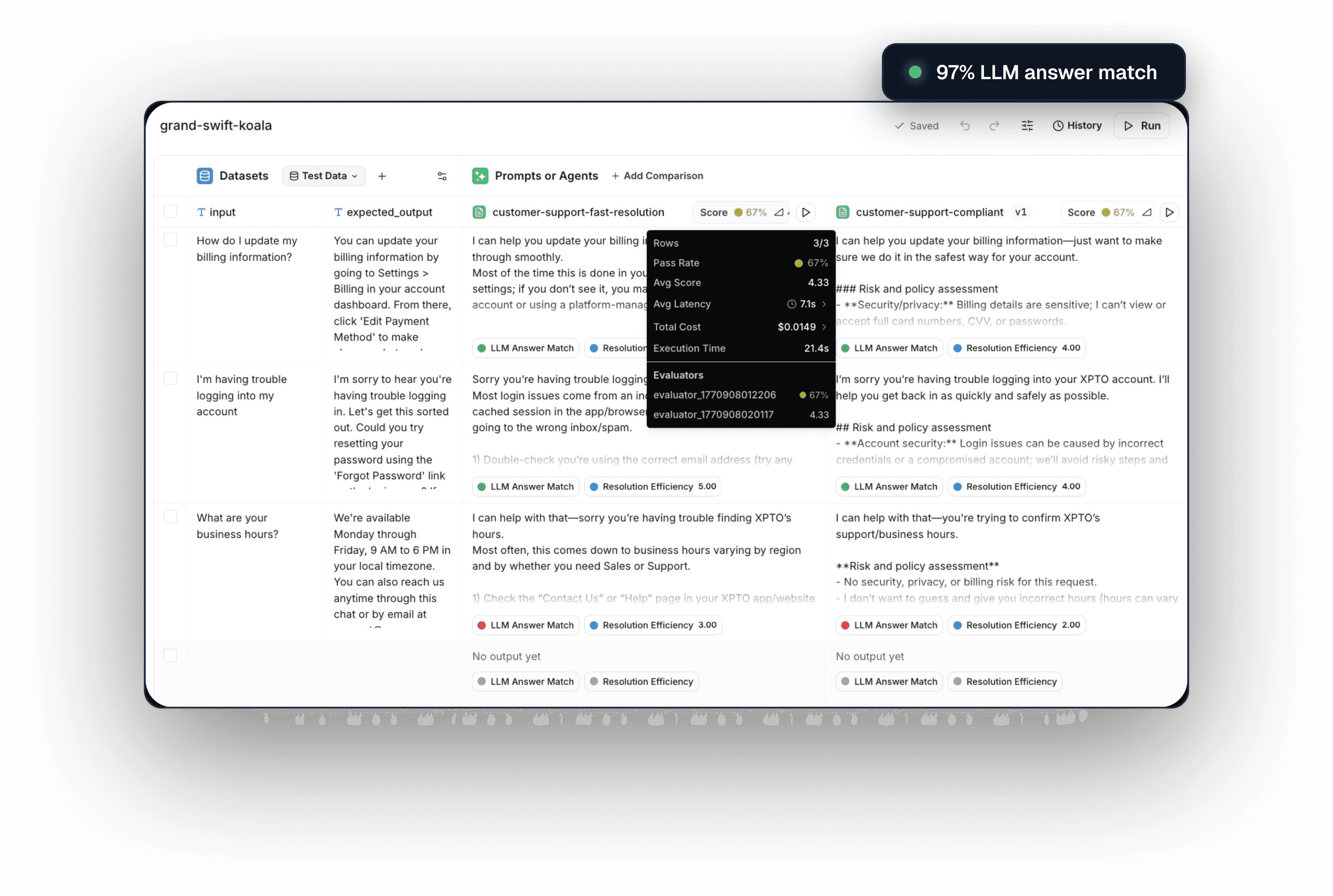

Run tests directly from the LangWatch platform or your code. Track the impact of every change across prompts and agent pipelines.

Automatically execute your full test suite with LangWatch, covering both pre-release testing and production monitoring.

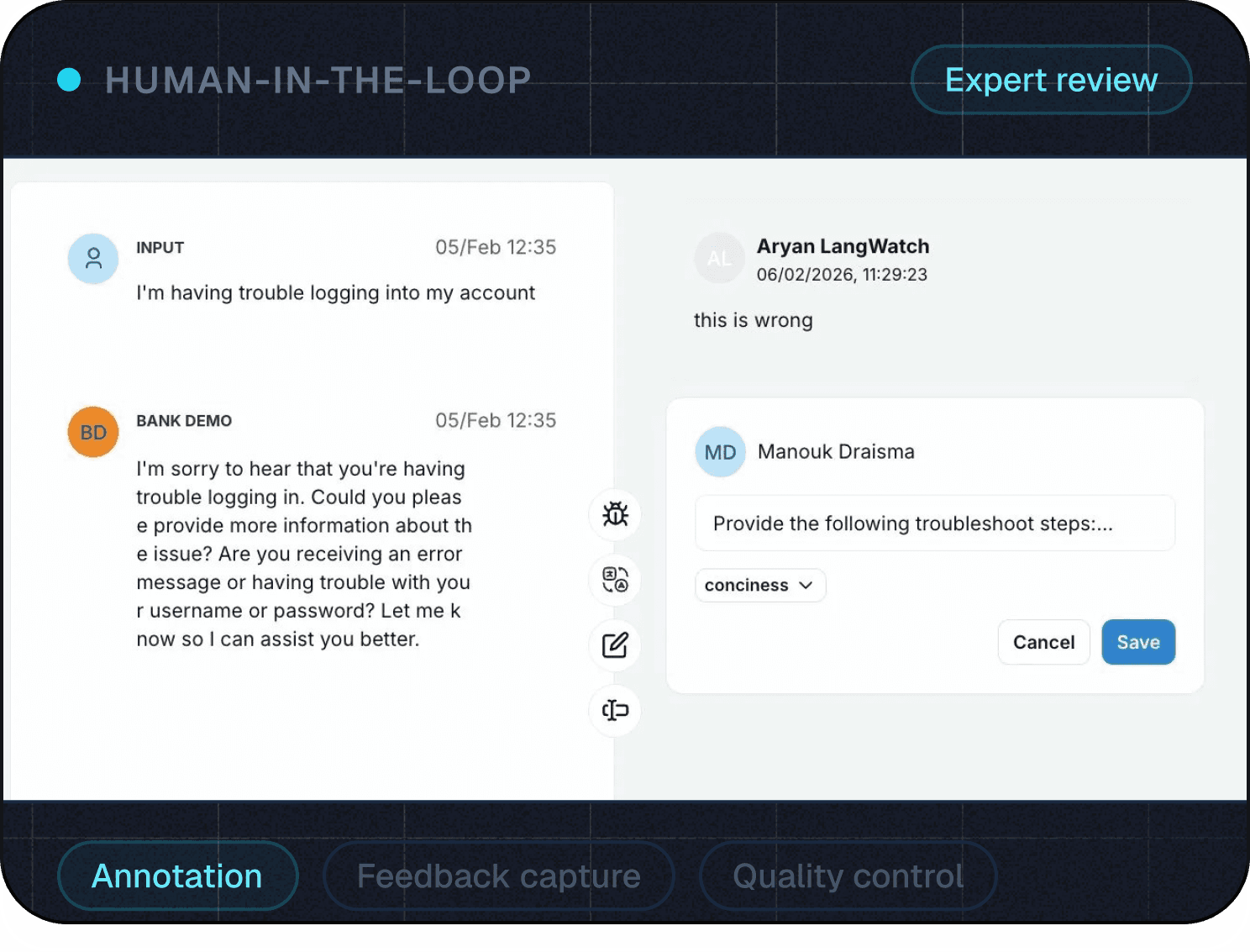

Collaborative workflows let teams inspect, annotate, and analyze data together, spotting patterns and sharing learnings across engineering, product, and business stakeholders.

Convert production traces into reusable test cases, golden datasets, and benchmarks to power experiments, regressions, and fine-tuning.
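To make the idea of turning traces into test cases concrete, the sketch below shows one possible shape of that conversion in plain Python. Everything here is an assumption for illustration: the trace dictionaries with `input`, `output`, and `feedback` keys, the `GoldenCase` class, and the `traces_to_golden_cases` helper are hypothetical and do not describe LangWatch's data model or SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenCase:
    """One reusable test case derived from a production trace (hypothetical shape)."""
    input: str
    expected_output: str


def traces_to_golden_cases(traces):
    """Keep traces a reviewer marked as good, one case per distinct input."""
    seen_inputs = set()
    cases = []
    for trace in traces:
        if trace.get("feedback") != "good":
            continue  # skip traces that were not approved
        if trace["input"] in seen_inputs:
            continue  # skip duplicate inputs
        seen_inputs.add(trace["input"])
        cases.append(GoldenCase(trace["input"], trace["output"]))
    return cases


# Illustrative trace records; a real export would carry many more fields.
traces = [
    {"input": "Reset my password", "output": "Here is the reset link flow.", "feedback": "good"},
    {"input": "Reset my password", "output": "Try again later.", "feedback": "bad"},
    {"input": "Cancel my order", "output": "Your order is cancelled.", "feedback": "good"},
]
golden = traces_to_golden_cases(traces)
```

The resulting list can then serve as a fixed dataset for comparing prompt or model changes against known-good answers.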

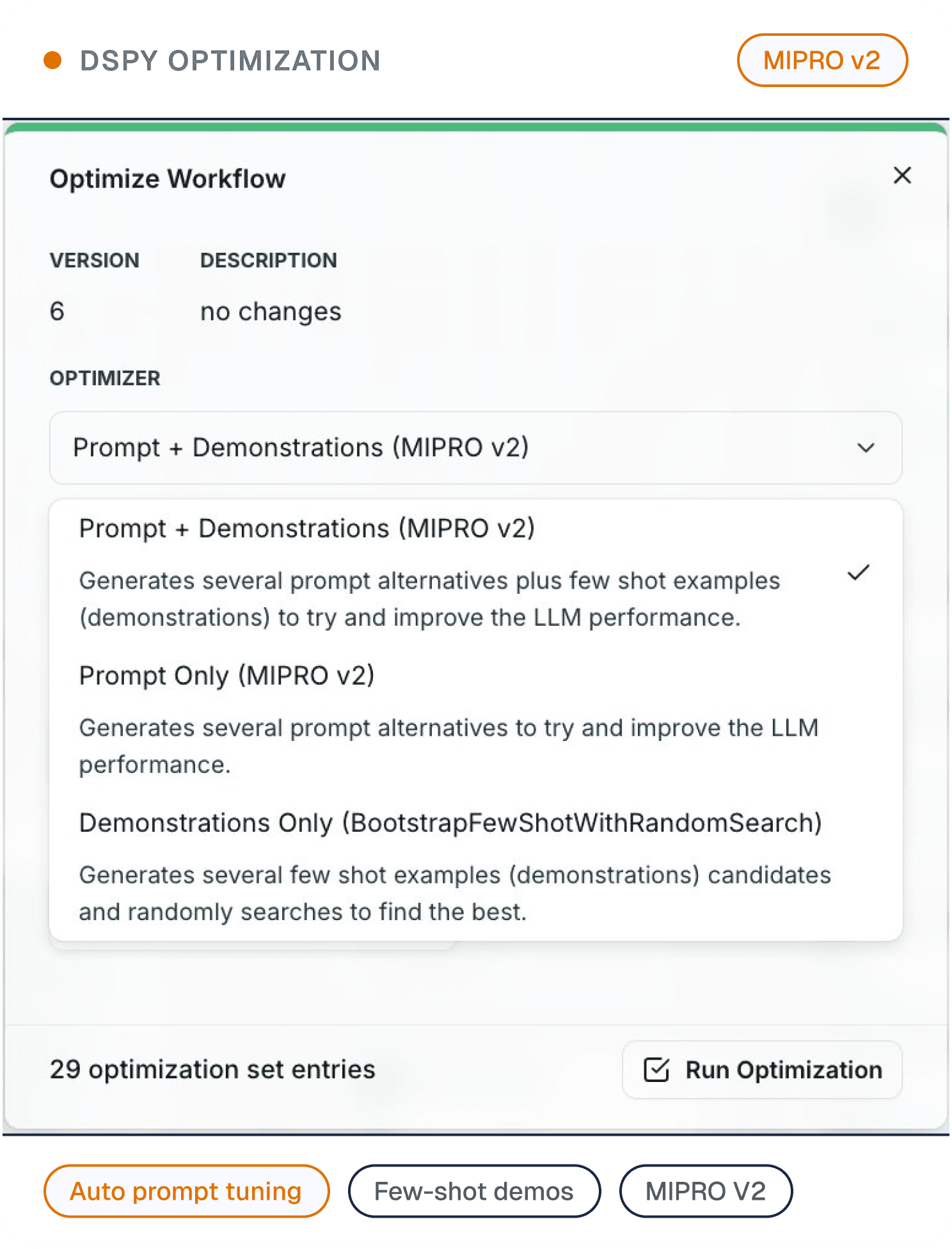

Systematically improve prompts, models, and pipelines using structured experimentation and optimization techniques.

“When I saw LangWatch for the first time, it reminded me of how we used to evaluate models in classic machine learning. I knew this was exactly what we needed to maintain our high standards at enterprise scale.”

```python
import os

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter
from opentelemetry.sdk import trace as trace_sdk
from opentelemetry.sdk.trace.export import ConsoleSpanExporter, SimpleSpanProcessor

# Set up OpenTelemetry trace provider with LangWatch as the endpoint
tracer_provider = trace_sdk.TracerProvider()
tracer_provider.add_span_processor(
    SimpleSpanProcessor(
        OTLPSpanExporter(
            endpoint="https://app.langwatch.ai/api/otel/v1/traces",
            headers={"Authorization": "Bearer " + os.environ["LANGWATCH_API_KEY"]},
        )
    )
)

# Optionally, you can also print the spans to the console.
tracer_provider.add_span_processor(SimpleSpanProcessor(ConsoleSpanExporter()))
```

Engineers control the results in production; PMs, domain experts, or CEOs define what counts as a good or bad scenario.

Get up and running with LangWatch in as little as 5 minutes.

Start Shipping